Kubernetes hasn’t been replaced by AI, but it’s being redefined by it. Anxiety is the prelude to rebirth.

After attending KubeCon EU 2026 in Amsterdam, I keep coming back to one conclusion: Kubernetes isn’t obsolete, but it’s no longer “enough”; it hasn’t been replaced by AI, but it’s being redefined by AI.

This was my third time attending KubeCon in Europe. Over the past few years, you can actually see the community’s mindset shift through the event slogans:

2024 Paris: La vie en Cloud Native

→ Cloud Native has become a “way of life,” the default state

2025 London: No slogan, just the 10th anniversary

→ Kubernetes reached a milestone, focusing on retrospection rather than moving forward

2026 Amsterdam: Keep Cloud Native Moving

→ But the question is: where is it moving?

The absence of a slogan in 2025 was a signal in itself:

When an ecosystem starts commemorating the past instead of defining the future, it’s already at an inflection point.

This article doesn’t recap the talks, but instead distills my observations at KubeCon into insights about Kubernetes’ anxiety and rebirth in the AI wave.

The Root of Anxiety: Is Kubernetes Facing a “Crisis”?

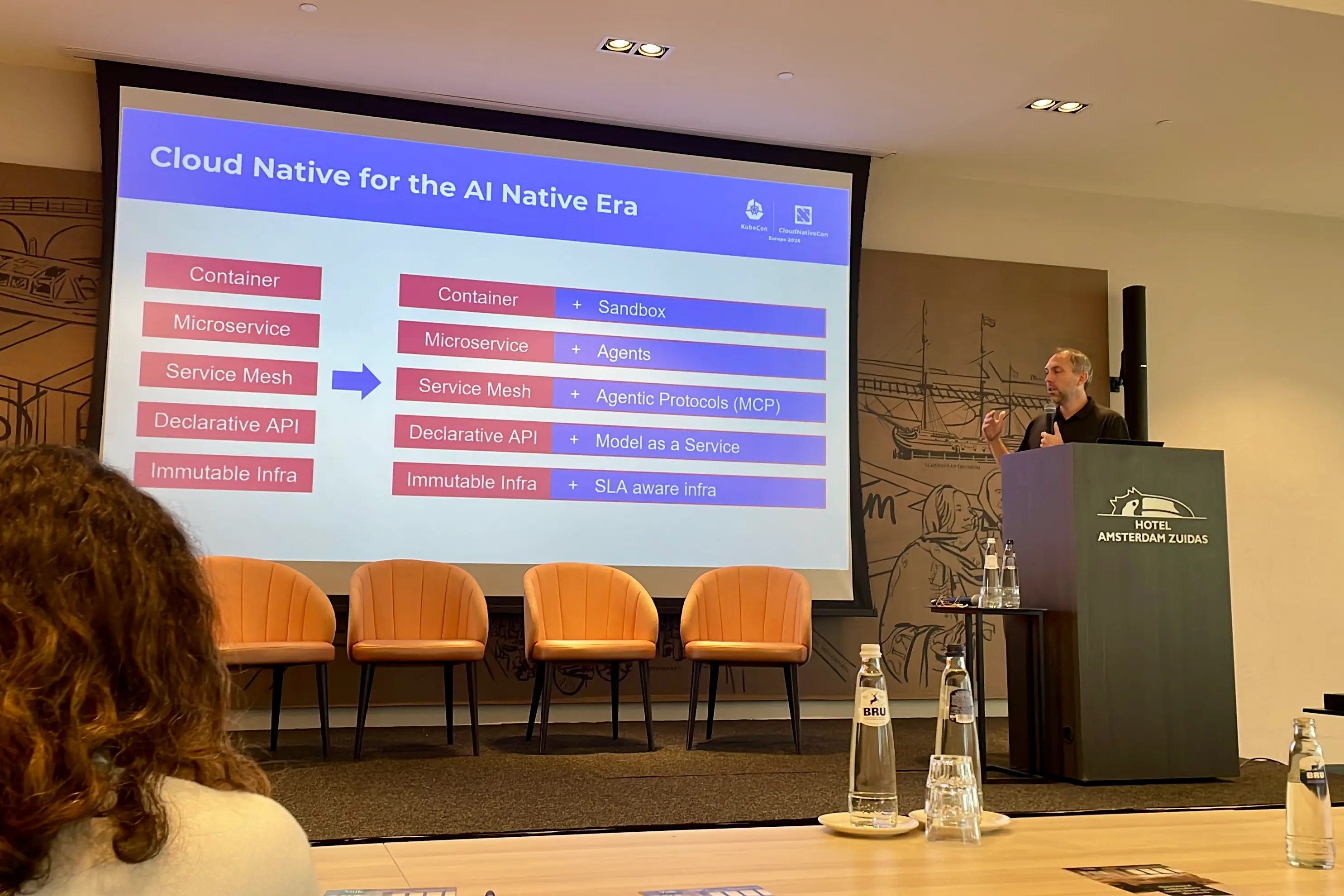

The biggest change at this KubeCon was that AI had crowded out most traditional cloud native topics. The focus shifted from service optimization and microservices management to deploying and managing AI workloads on Kubernetes, especially inference tasks and GPU scheduling.

Kubernetes was once the core of the cloud native world as its foundational infrastructure. With the explosive growth of AI models, the new source of anxiety is whether it can still serve as a “universal” platform for everything.

The AI boom brings real challenges: Can Kubernetes’ “universality” adapt to the complexity of AI workloads?

The Focus Brought by the AI Boom

The AI boom has taken over the cloud native spotlight. AI coding, OpenClaw, large language models, and generative models have all drawn widespread attention, and AI has become the core computing demand in the real world.

This surge in demand raises the question: Can Kubernetes continue to serve as the infrastructure platform for these complex tasks? In particular, is the traditional Kubernetes resource model sufficient for GPU sharing, inference model scheduling, VRAM allocation, and device attribute selection?

In the past, Kubernetes handled compute, storage, and networking as foundational infrastructure. But with the rapid development of AI, its “universality” is being challenged. For inference tasks in particular, the Kubernetes resource model looks thin.

Comparing with OpenStack: Will Kubernetes Repeat History?

OpenStack once aimed to be a complete open-source cloud platform, but ultimately failed to sustain growth due to complexity and a lack of flexibility in adapting to new technologies.

Will Kubernetes follow the same path? I believe Kubernetes has different strengths: as a container and microservices orchestration platform, it’s widely adopted and has strong community and vendor support. It doesn’t try to replace all cloud provider capabilities but serves as an infrastructure control plane to help users manage resources.

However, as AI workloads become mainstream, Kubernetes must find a new position to avoid being replaced by “AI-optimized platforms.”

Kubernetes’ Challenge: The GPU Resource Management Gap

At KubeCon, NVIDIA announced the donation of the GPU DRA (Dynamic Resource Allocation) driver to the CNCF, marking the upstreaming of GPU resource management. GPU sharing and scheduling have become urgent issues for Kubernetes.

Traditionally, Kubernetes relied on the Device Plugin model to schedule GPUs, which only supports allocation by device count (e.g., nvidia.com/gpu: 1). But AI inference tasks need more information for resource scheduling, such as VRAM size, GPU topology, and sharing strategies. DRA, backed by NVIDIA’s GPU driver, makes GPU resource management more flexible and intelligent, gradually easing the “GPU resource crunch” in AI workloads.

This shift means Kubernetes is no longer just a “container orchestration platform,” but is becoming the infrastructure layer for AI-specific resource scheduling.
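
To make the difference between count-based and attribute-based requests concrete, here is a minimal sketch. It is not the Device Plugin or DRA API: the `Gpu` record, the `topology_group` label, and both picker functions are hypothetical names I made up for illustration, and a real scheduler weighs far more than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    name: str
    vram_gib: int
    topology_group: str  # hypothetical label for GPUs that share a fast interconnect


def pick_by_count(free, count):
    """Count-based request: any `count` free devices are considered equivalent."""
    return list(free[:count]) if len(free) >= count else None


def pick_by_attributes(free, count, min_vram_gib, same_group=False):
    """Attribute-based request: filter on device properties before allocating."""
    candidates = [g for g in free if g.vram_gib >= min_vram_gib]
    if not same_group:
        return candidates[:count] if len(candidates) >= count else None
    # Group the remaining candidates and return the first group that is large enough.
    groups = {}
    for gpu in candidates:
        groups.setdefault(gpu.topology_group, []).append(gpu)
    for members in groups.values():
        if len(members) >= count:
            return members[:count]
    return None


# Made-up inventory: one small card and three large ones in different groups.
FREE = [
    Gpu("gpu-0", 24, "a"),
    Gpu("gpu-1", 80, "b"),
    Gpu("gpu-2", 80, "c"),
    Gpu("gpu-3", 80, "b"),
]

by_count = pick_by_count(FREE, 1)
by_vram = pick_by_attributes(FREE, 1, min_vram_gib=40)
paired = pick_by_attributes(FREE, 2, min_vram_gib=40, same_group=True)
```

With this inventory, the count-based request is handed the 24 GiB card even when the model needs 40 GiB, while the attribute-based request skips it and can also insist that two GPUs come from the same group.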

Against this backdrop, both the community and industry are exploring finer-grained GPU resource abstraction and scheduling mechanisms. For example, the open-source project HAMi is building a GPU resource management layer for AI workloads on top of Kubernetes, supporting GPU sharing, VRAM-level allocation, and heterogeneous device scheduling.

These efforts are not about replacing Kubernetes, but about filling the resource model gaps for the AI era. In the long run, this layer may evolve into a “GPU Abstraction Layer” similar to CNI/CSI, becoming a key part of AI-native infrastructure.
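
As a rough illustration of what VRAM-level allocation means in practice, the sketch below packs several workloads onto shared GPUs by memory instead of by whole device. This is an assumption-laden toy, not HAMi’s actual algorithm: the best-fit policy, the `place_by_vram` function, and all pod names and sizes are invented for the example.

```python
def place_by_vram(free_mib, requests_mib):
    """Best-fit placement: put each request on the GPU with the least free VRAM that still fits.

    free_mib maps GPU name -> free VRAM in MiB; requests_mib maps pod name -> VRAM needed.
    Returns (placement, remaining); a pod that fits nowhere is mapped to None.
    """
    remaining = dict(free_mib)
    placement = {}
    for pod, need in requests_mib.items():
        fitting = [(left, gpu) for gpu, left in remaining.items() if left >= need]
        if not fitting:
            placement[pod] = None
            continue
        _, gpu = min(fitting)
        remaining[gpu] -= need
        placement[pod] = gpu
    return placement, remaining


# Hypothetical cluster state and workloads.
free = {"gpu-0": 24576, "gpu-1": 81920}
requests = {"embedder": 8192, "reranker": 12288, "llm": 65536, "extra": 20480}

placement, remaining = place_by_vram(free, requests)
```

In this run the two small models share gpu-0, the large model takes most of gpu-1, and the last request is left unplaced because no single GPU has 20 GiB free. Under whole-device allocation, the same two GPUs could host only two of the four workloads.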

The Production “Gap”: Many AI PoCs, Few in Production

A common post-event summary was: Many PoCs, but “everyday production deployments” are still rare. Pulumi summarized it as:

lots of working demos, very few production setups people trust

This shows that while many AI workload solutions succeed in technical demos, the transition from experimentation to production remains difficult. For both GPU resource sharing and inference request scheduling, it is still an open question whether Kubernetes as the foundation can support this transition.

The Rise of Inference Systems: Kubernetes’ Scheduling Boundaries Are Challenged

Another major event at this KubeCon was llm-d being contributed to the CNCF as a Sandbox project.

If GPU DRA represents the upstreaming of device resource models, then llm-d represents another critical evolution: distributed LLM inference is moving from proprietary engineering implementations to standardized, community-driven collaboration within cloud native.

This is significant not just because it’s another open-source project, but because it shows that Kubernetes’ challenges in the AI era are no longer just about “how to schedule GPUs,” but also “how to host inference systems themselves.” As prefill/decode separation, request routing, KV cache management, and throughput optimization move into the infrastructure layer, Kubernetes’ boundaries are being redefined.

Traditionally, the Kubernetes scheduler focused on placing Pods onto nodes. But in AI inference scenarios, scheduling is not just about picking a node; it’s about selecting the most suitable inference instance based on request characteristics. Factors like model state, request queue depth, and cache hit rate all need to be considered. This process is increasingly managed by inference runtimes, forming new “request-level scheduling” systems.

This leads to an overlap between the Kubernetes scheduler and inference systems, forcing Kubernetes to rethink its role: should it keep expanding, or collaborate with inference systems?
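
A request-level scheduler of the kind described above can be sketched in a few lines. This is only my illustration of the idea, not how llm-d or any particular inference gateway works: the `Replica` record, the scoring weights, and the notion of matching on a whole prompt prefix are all simplifying assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class Replica:
    name: str
    model: str
    queue_depth: int
    cached_prefixes: set = field(default_factory=set)


def pick_replica(replicas, model, prompt_prefix, queue_penalty=1.0, cache_bonus=3.0):
    """Pick the replica serving `model` with the best score for this request.

    The score rewards a likely KV cache hit and penalizes queued requests.
    Returns None if no replica has the model loaded.
    """
    candidates = [r for r in replicas if r.model == model]
    if not candidates:
        return None

    def score(replica):
        hit = cache_bonus if prompt_prefix in replica.cached_prefixes else 0.0
        return hit - queue_penalty * replica.queue_depth

    return max(candidates, key=score)


# Hypothetical fleet: two replicas of one model, one replica of another.
replicas = [
    Replica("a", "chat-model", queue_depth=2, cached_prefixes={"sys-v1"}),
    Replica("b", "chat-model", queue_depth=0),
    Replica("c", "other-model", queue_depth=0),
]

warm = pick_replica(replicas, "chat-model", "sys-v1")
cold = pick_replica(replicas, "chat-model", "sys-v2")
```

None of these inputs exist at the Pod scheduling level: the decision is made per request, against live replica state, which is why it ends up in the inference layer and not in the Kubernetes scheduler.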

AI-Native Infrastructure: The Key Challenge for Production

At the AI Native Summit, the real needs for AI-native infrastructure were especially clear. The focus was no longer “can it run on Kubernetes,” but how to make AI workloads routine, stable, and production-ready on Kubernetes.

The core challenge is delivery. Unlike traditional apps, AI model weights are often huge, from tens of GB up to TB, which makes model delivery and data management extremely complex. Traditional container delivery mechanisms (like image layers) struggle with such massive data and complex versioning.

A key direction for Kubernetes is to standardize how model weights and data are delivered, using ImageVolume and OCI artifacts to handle model distribution and version management. This not only reduces “cold start” times but also provides infrastructure support for multi-tenancy and compliance.
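
To show roughly what this looks like, the sketch below builds a Pod manifest that mounts model weights from an OCI reference through an image volume. The registry, image names, and tag are hypothetical, and whether the image volume feature is available depends on your cluster version and feature gates, so treat this as an outline and check the Kubernetes documentation before relying on it.

```python
import json


def pod_with_model_volume(name, server_image, model_ref, mount_path="/models"):
    """Build a Pod manifest that mounts an OCI artifact read-only as model weights."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "containers": [
                {
                    "name": "server",
                    "image": server_image,
                    "volumeMounts": [
                        {"name": "model-weights", "mountPath": mount_path, "readOnly": True}
                    ],
                }
            ],
            "volumes": [
                {
                    "name": "model-weights",
                    # Image volume source: the weights are pulled like an image,
                    # but versioned and shipped separately from the server image.
                    "image": {"reference": model_ref, "pullPolicy": "IfNotPresent"},
                }
            ],
        },
    }


# All names below are placeholders, not real images.
manifest = pod_with_model_volume(
    name="inference-demo",
    server_image="registry.example.com/serving/runtime:1.0",
    model_ref="registry.example.com/models/demo-llm:v1",
)
print(json.dumps(manifest, indent=2))
```

The point of the split is that the serving runtime and the weights get independent tags: rolling out a new model version changes only the volume reference, and the node can cache the artifact like any other image.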

Summary

Kubernetes won’t be replaced by AI, but it’s being reshaped as the core of infrastructure. This anxiety is the force driving its evolution: it’s moving from a “general-purpose infrastructure platform” to an “AI-powered multifunctional base.” Some even call it the AI operating system.

In the future, Kubernetes’ core competitiveness will no longer be just container management, but how effectively it can schedule and manage AI workloads, and how it can make AI a routine part of operations. This was my biggest takeaway from the AI Native Summit and KubeCon, and it’s what I look forward to in the Kubernetes ecosystem over the next few years.